Vercel ARR Hits $340M, and the 5 Moats That Matter in the AI Era

The core judgment is simple: Is it hard to do, or hard to get?

Vercel and the “Shovel-Seller” Play in the AI Era

As a platform providing the infrastructure to build, deploy, and host web apps and AI agents, Vercel is the quintessential “shovel-seller” story of our time.

According to CEO Guillermo Rauch, Vercel has become a primary beneficiary of the Claude Code explosion. Vercel’s popularity within the developer ecosystem has naturally made it the default deployment tool that Claude recommends. We are only seeing the tip of the iceberg:

While Vercel customers using Claude represent just over 1% of the total user base, they already account for nearly 15% of all deployments.

At the same time, the share of deployments generated by AI agents is skyrocketing, climbing from roughly 5% in June 2025 to over 21% by February 2026. Within this “agentic” segment, nearly 70% of deployments originate from Claude Code.

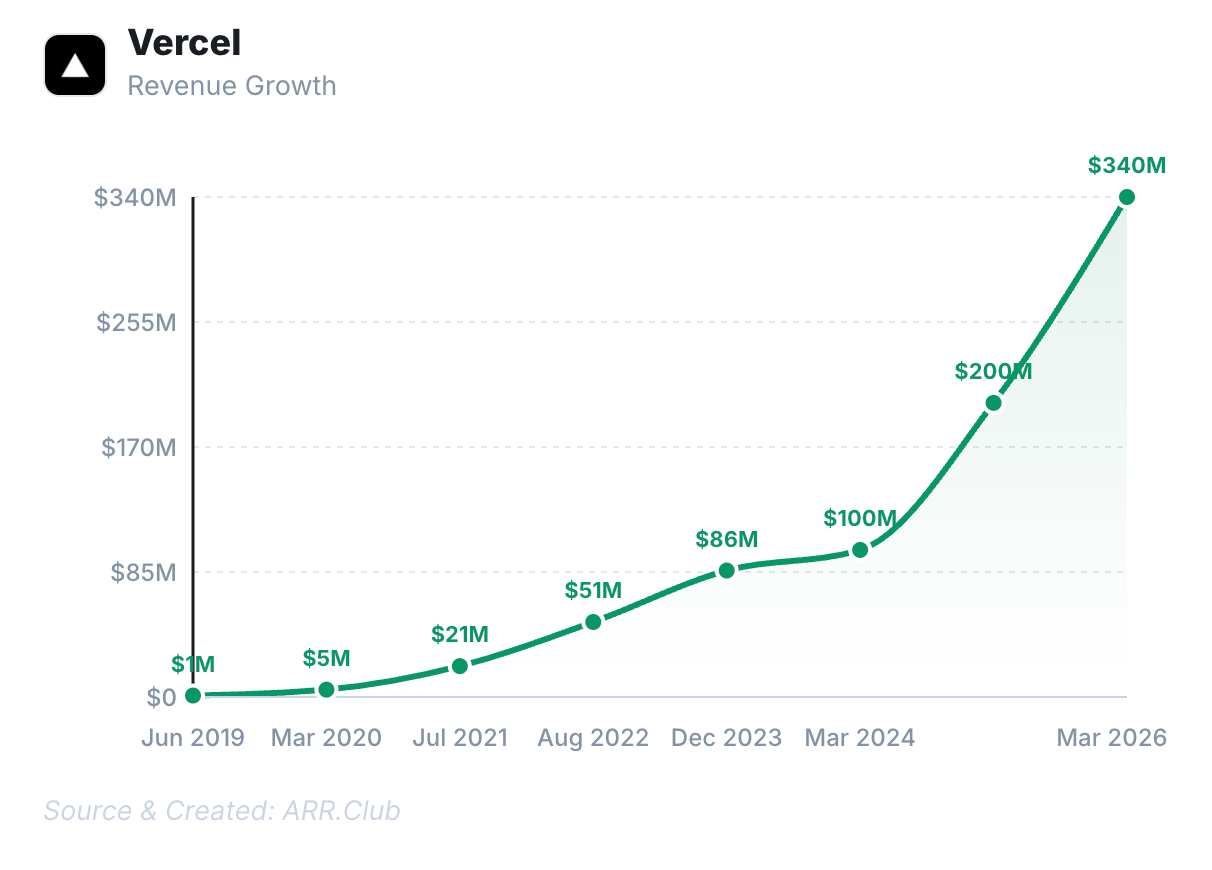

This massive surge in Vibe Coding has propelled Vercel’s ARR past the $340M mark. After crossing the $100M milestone, the company’s growth rate has actually accelerated year-over-year.

Last September, the company closed a $300 million funding round led by Accel and GIC, boosting Vercel’s valuation to $9.3 billion, a nearly three-fold jump from $3.25 billion just a year prior. Rauch’s ultimate vision? To make the “one-person unicorn” a tangible reality.

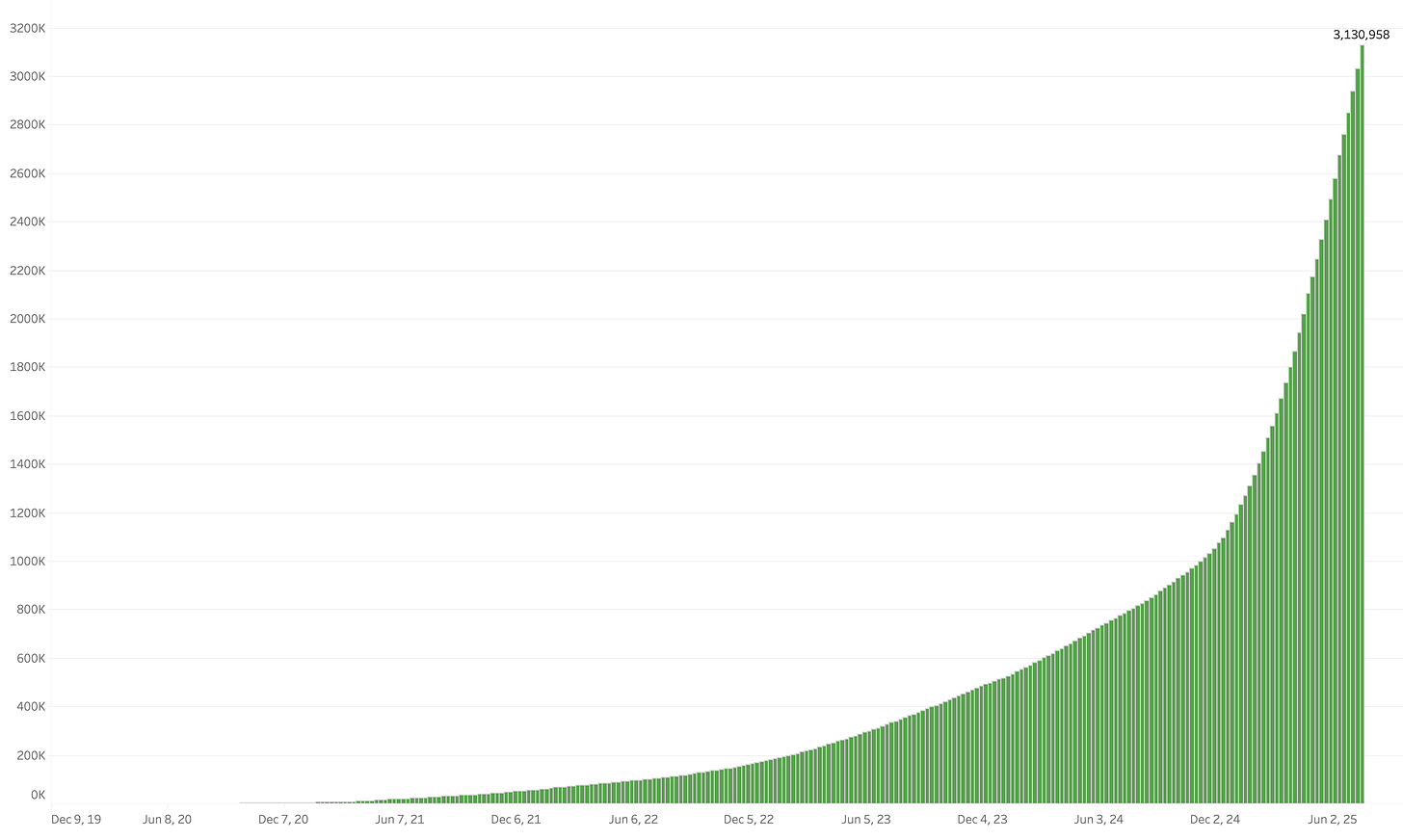

Other AI infrastructure companies have also exploded. One is Supabase, which has become a foundational piece of the AI coding stack.

In 2025, its developer base grew from 1M to 2M in four months, then to 3M just three months later. Nearly every “vibe coding” product leverages it as part of its GTM motion.

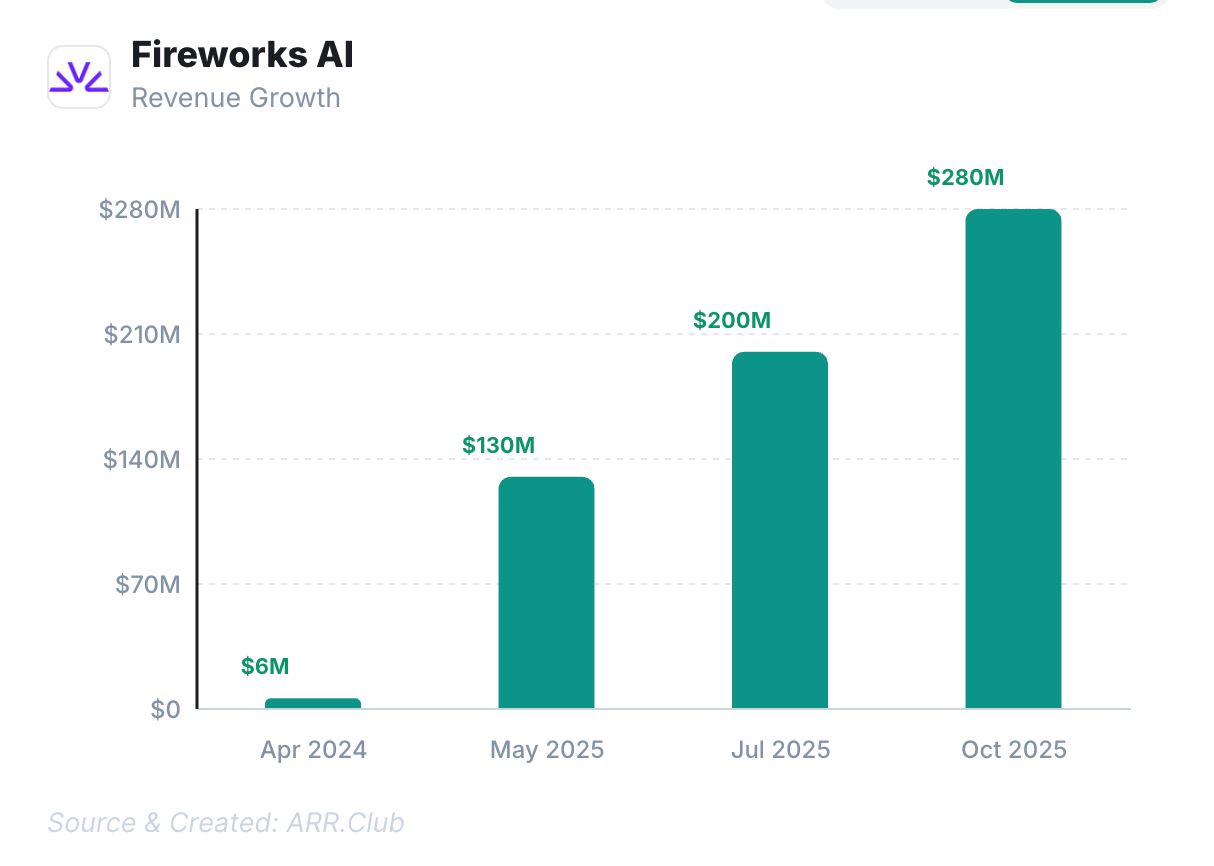

Fireworks, positioned as a GenAI platform-as-a-service, crossed $130M ARR in May 2025, hit $200M by July, and surpassed $280M by October 2025.

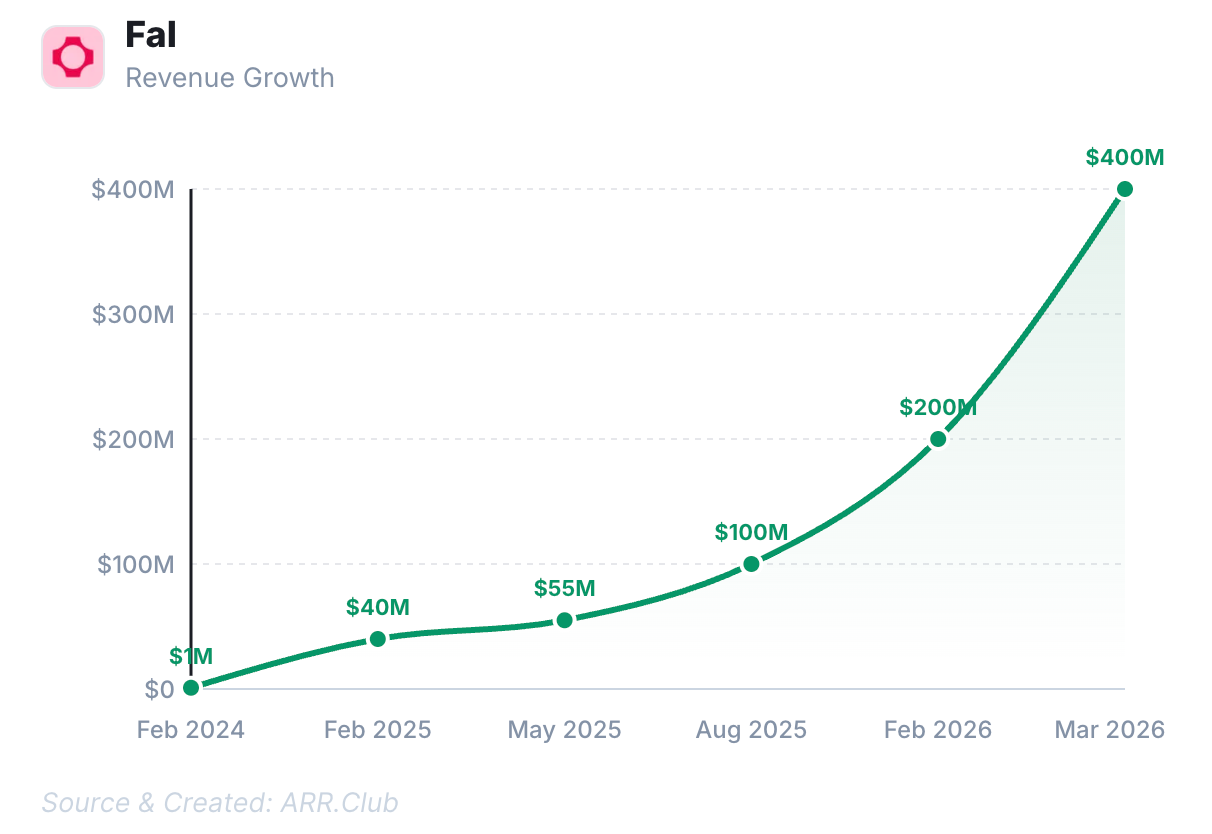

Fal, a generative media platform, grew from $1M to $40M ARR earlier in 2025, quickly hit $100M ARR in August 2025, and has now reached $400M ARR.

The 5 Moats That Matter in the AI Era

In a recent piece, Michael Bloch, partner at Quiet Capital, argued that in the age of AI, only five moats truly hold water:

Compounding proprietary data, network effects, regulatory permission, capital at scale, and physical infrastructure.

Michael notes that many skeptics doubt “intelligence” will become 100x faster, stronger, and cheaper. The reality? It will, and it’s happening fast.

Moats have always been built on two pillars: things that are hard to do and things that are hard to get. AI is rapidly democratizing the former. Building software, maintaining complex integrations, or embedding a product so deeply into a customer’s workflow that it takes a year to rip out—these used to be “hard to do.” Now, they are becoming trivial.

Conversely, owning 10 million users, a government license, a chip fab, or $1 billion in deployable capital—these remain “hard to get.” AI compresses the “time to do,” but it cannot compress the “time for things to happen.” This distinction has become his most critical filter for investment.

Regarding compounding proprietary data, the real moat is “living data”—proprietary information generated through operations that already possess their own moats. While network effects and regulatory licenses are well-understood, the scale of capital is a factor almost everyone underestimates.

The endgame is the “Physical World.” A chip fab costs $20 billion; a nuclear power plant costs $10 billion; satellite constellations cost even more. This is why Elon Musk, while musing that money might not matter in 15 years, is simultaneously raising $75 billion and pushing SpaceX toward an IPO.

When the bottleneck shifts from software to “atoms,” the ability to raise and deploy capital at a massive scale becomes a terminal advantage. Capital here isn’t just cash—it’s institutional trust, a proven track record, and relationships built over decades.

Designing a system with AI might take a week, but you cannot manufacture, install, and connect thousands of physical devices in a week. The laws of physics set a floor on time that intelligence cannot bypass. Those who start building first establish a lead that widens over time. This is why physical infrastructure is one of the most vital moats of the AI era.

The assets that grow stronger as AI evolves share a common trait: they require “real-world time” to accumulate—a dimension no amount of intelligence can compress. Network density requires years of user adoption; regulatory approval requires years of political maneuvering; infrastructure requires years of construction; data requires years of compounding; and capital relationships require decades of trust.

Time that cannot be parallelized is the “meta-moat” behind these five categories.

I find Michael’s framework incredibly compelling. The core judgment is simple: Is it hard to do, or hard to get? If your moat is limited by “intelligence,” its collapse is only a matter of time. But if your moat is limited by “time” itself (by human behavior, physical laws, political will, or capital), then you are likely building something made to last.